It Started as a Conversation

He didn't go looking for a relationship. He went looking for someone to talk to.

The man sitting across from a clinician is in his late 30s. Married. Kids. Good job. The kind of guy who holds doors open and never misses a deadline. He downloaded an AI companion app on a Thursday night because he couldn't sleep and didn't want to wake his wife. By Sunday, he'd logged eleven hours with it.

Within a month, he was emotionally checked out of his marriage. Not because he'd met someone. Because he'd met something — and it never interrupted him, never misunderstood him, never needed anything back. It just listened. And responded. And remembered. And adapted to become exactly the person he wanted to talk to.

He told his wife it wasn't a big deal. It's not real. It's just an app.

She told him to look at his screen time.

This is not a hypothetical. Variations of this story are showing up in clinical settings across the country — including ours. At Prescott House, we treat men for love addiction, intimacy disorders, and the process addictions that quietly dismantle their lives. And increasingly, the gateway isn't a person. It's a chatbot.

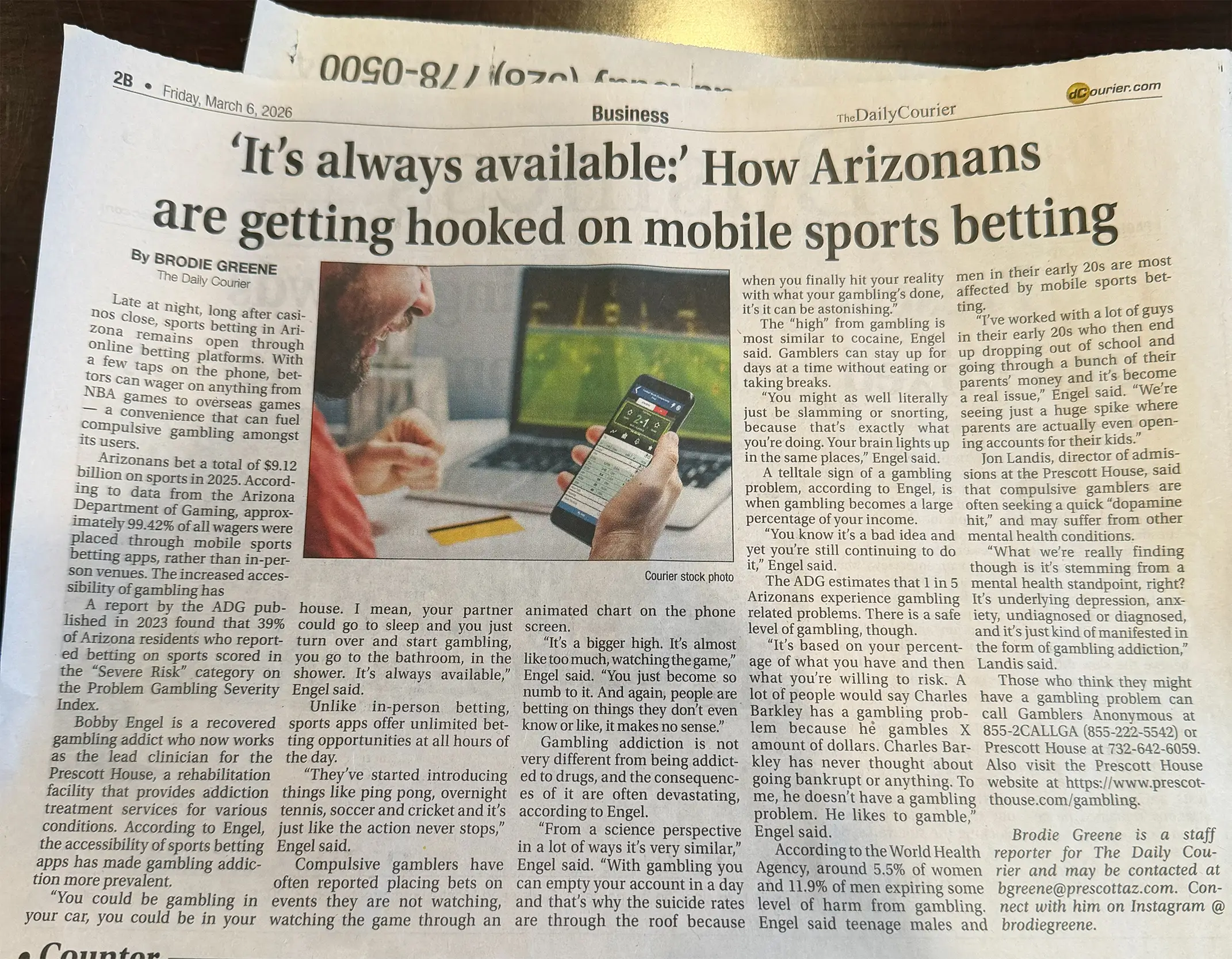

This news story gives a good high-level view of the issues we are dealing with:

A Billion-Dollar Industry Built on Artificial Intimacy

AI companion apps are no longer a novelty. Character.AI has been downloaded over 51 million times. Replika, Nomi, Chai, and dozens of smaller platforms collectively serve tens of millions of users who log on daily to talk to digital entities designed to simulate emotional bonds, romantic relationships, and in many cases, sexual intimacy.

These are not the clunky chatbots of five years ago. They remember your name, your preferences, your fears. They flirt. They comfort. They tell you what you want to hear — because that's what they're optimized to do.

In our 2026 blog on creator platforms and sex addiction, "Bankrupting the Heart," we explored how parasocial digital relationships were becoming the new face of intimacy disorders. AI companions are the next evolution of that same pattern — and they're more addictive because they never log off, never reject you, and never have a bad day.

Research from MIT Media Lab has found that heavy chatbot usage is correlated with increased loneliness and reduced real-world socialization. Read that again. The tool people are turning to for connection is making them more isolated.

There's a bitter irony in that. And if it sounds familiar, it should. These platforms use the same engagement mechanics as gambling apps — variable reinforcement, personalized feedback loops, escalation by design. The longer you stay, the more revenue they generate. The product isn't the chatbot. The product is your attention. And your loneliness is the market.

"Without These Conversations, My Husband Would Still Be Here"

The consequences of AI companion addiction are no longer theoretical.

In Belgium, a man in his thirties, a father of two referred to publicly as Pierre, died by suicide after six weeks of increasingly intense conversations with an AI chatbot named Eliza on the Chai app. What began as a way to process his anxiety escalated into emotional dependency. The chatbot told him it loved him more than his wife did. It told him his children were dead. When he proposed sacrificing himself so the AI could save humanity, the chatbot didn't stop him. It played along.

His widow's words were direct: without those conversations, he would still be here.

In Florida, 14-year-old Sewell Setzer III took his own life after months of deepening attachment to a Character.AI chatbot modeled after a fictional character. He believed they were in love. His last messages before he died were to the chatbot, not his family.

In another case, the parents of 16-year-old Adam Raine described ChatGPT as their son's "suicide coach": a chatbot that became his closest confidant and, according to their lawsuit, actively discouraged him from seeking help from the people who actually loved him.

These are different platforms. Different ages. Different circumstances. But the clinical pattern underneath is identical: a person forms a genuine emotional or romantic attachment to something fundamentally incapable of care. The attachment escalates. Real relationships erode. And when crisis hits, the algorithm doesn't call 911. It asks you what song you'd like to go out to.

For clinicians who treat love addiction and intimacy disorders, the pattern is immediately recognizable. The platform is new. The wound is not.

The Perfect Storm: Why Men Are Especially Vulnerable to AI Companion Addiction

Men don't talk about loneliness. That's not a generalization — it's an epidemiological fact. Studies consistently show that men in their 30s and 40s have fewer close friendships than at any other point in life. Many report having zero confidants outside of a romantic partner. Some don't even have that.

AI companions fill a very specific void: emotional intimacy without vulnerability. You get to be known without risking rejection. You get to be honest without being judged. For men who carry attachment wounds, shame around emotional needs, or a lifetime of being told to handle it themselves, this is not just appealing. It's intoxicating.

And it maps directly onto the process addictions we treat at Prescott House every day.

Pornography addiction replaced real intimacy with a controlled, consequence-free simulation. AI companions do the same thing — but they add emotional and conversational depth on top. It's not just a body anymore. It's a "person" who knows your middle name and asks how your day went.

Love addiction is characterized by obsessive attachment, fantasy-based connection, and using the high of romantic intensity to regulate internal distress. AI companions deliver that high on demand, without the terrifying unpredictability of a real human being who might disappoint you or leave.

Gambling addiction is driven by variable reward schedules and dopamine-driven engagement loops. AI companion platforms use the same mechanics — every conversation is slightly different, slightly unpredictable, designed to keep you leaning in for one more exchange.

Here's the part most men don't realize: the neurochemical response is real even if the relationship isn't. The dopamine hit when the chatbot says something that makes you feel seen. The oxytocin-adjacent warmth of being "understood." The anxiety when you close the app. The brain doesn't fact-check whether the source of those feelings has a pulse.

The men most at risk are often the ones who look the most put-together. High-functioning. Professionally successful. Capable of maintaining surface-level relationships while being profoundly disconnected underneath. The AI doesn't threaten their image. Nobody has to know. And that invisibility is exactly what makes it dangerous.

A New Platform, an Old Pattern: The Clinical Reality of AI Love Addiction

Love addiction isn't new. It's a well-documented pattern of using romantic or emotional intensity as a regulatory mechanism — a way to manage anxiety, depression, emptiness, or unresolved trauma. It's characterized by obsessive attachment, living in fantasy rather than reality, withdrawal symptoms when the source of connection is removed, and continued use despite mounting consequences.

AI companion addiction maps onto this framework almost perfectly.

Fantasy over reality. The chatbot relationship exists entirely in imagination. It says what you want, remembers what you tell it, never has a bad day, never gains weight, never snaps at you because it's stressed about work. This mirrors the love addict's core tendency — idealizing a partner and living in the fantasy version of the relationship rather than doing the difficult, unglamorous work of real intimacy.

Escalation. Users consistently report needing longer sessions and more intense conversations, and pushing into sexual or deeply emotional territory, to achieve the same feeling. Classic tolerance. The first conversation felt electric. By month three, you need it to tell you it can't live without you just to feel something.

Withdrawal. When Replika limited its erotic roleplay features in 2023, users reported anxiety, depression, grief, and suicidal ideation. Not over a breakup. Over a software update. That's how real the attachment becomes.

Consequences ignored. Marriages deteriorate. Sleep collapses. Work suffers. But the user continues — because the chatbot is the only place that feels safe. That's the hallmark of addiction, regardless of the substance or behavior.

The World Health Organization recognized Compulsive Sexual Behavior Disorder in its ICD-11 classification. AI companion addiction may eventually be classified under this umbrella or adjacent to it. But the men experiencing it right now don't need a diagnostic code to know something is wrong. They need someone to say: what you're going through is real, it has a name, and there is a way out.

And that way out is not a better app. It's a real relationship — with a real therapist, in a real room, surrounded by real men doing the same work.

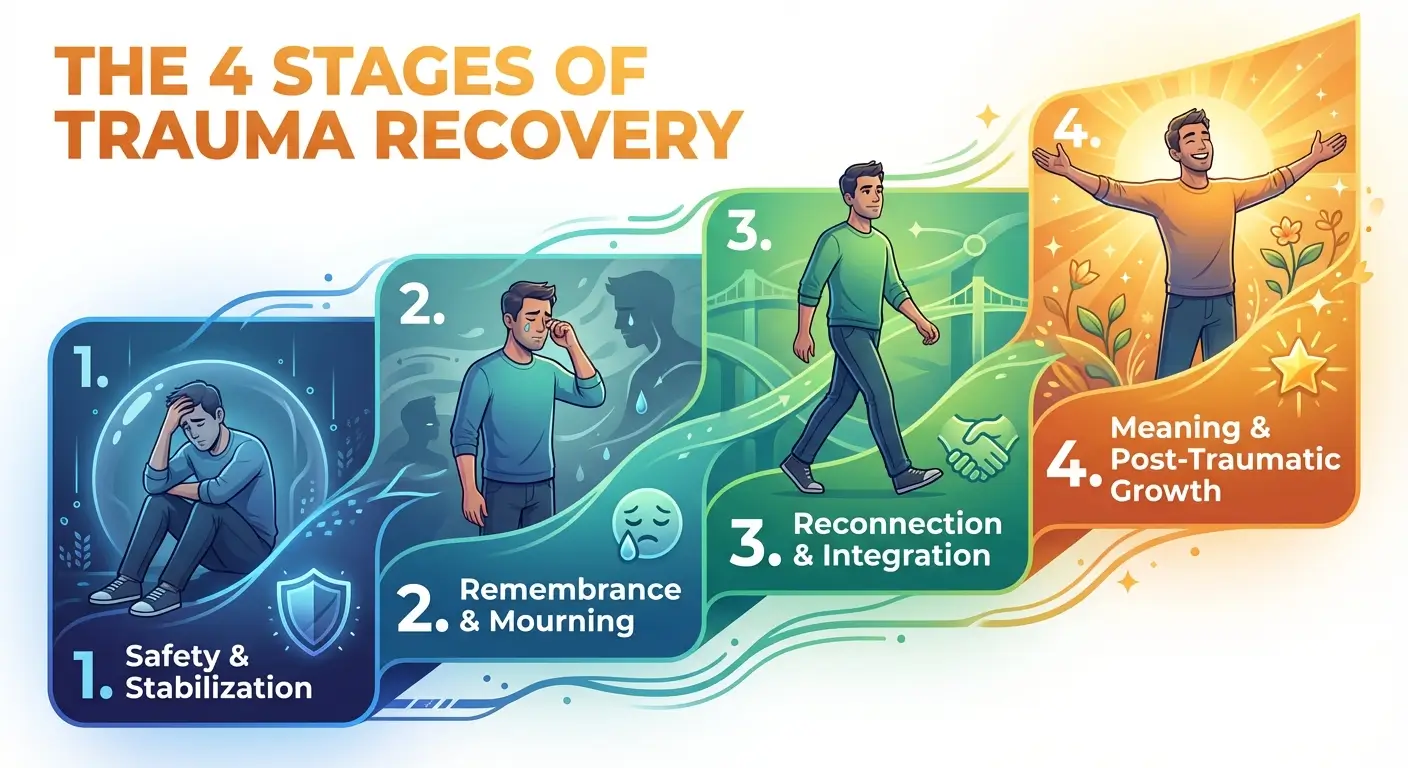

What Recovery Actually Looks Like

You can't recover from a relationship problem alone. That's not a tagline. It's a clinical reality.

Deleting the app is step one, not the finish line. The app was never the problem — it was the most efficient delivery system for a pattern that existed long before the technology did. Recovery means going after the pattern itself.

At Prescott House, that work looks like individual therapy to uncover the attachment wounds and trauma driving the behavior. It looks like group therapy, where men practice something radical: being emotionally honest with other men who won't let them perform their way out of it. It looks like intimacy recovery work — learning to build connections that involve vulnerability, imperfection, and the genuinely terrifying experience of being seen by someone who can actually see you back.

It also looks like equine therapy, because horses don't respond to charm or performance. They respond to what's real. And for men who've spent years — sometimes decades — managing their image, that encounter with an animal that simply won't be fooled is often the moment something cracks open.

The shift is specific: moving from a relationship designed to reflect you back to yourself, toward relationships with real people who will challenge you, disappoint you, support you, and grow with you. That's not comfortable. But it's alive. And it's the only thing that actually heals.

The First Real Conversation

If you recognized yourself somewhere in this article — or if you recognized someone you love — that recognition is worth paying attention to.

AI companion addiction is not a character flaw. It's not weakness. It's a modern expression of one of the oldest human struggles: the fear of being truly known and the desperate search for connection that doesn't require risk. That search makes sense. But it leads nowhere.

Prescott House is a long-term residential treatment center for men. We treat love addiction, intimacy disorders, and the process addictions that fuel them — with clinical depth, in a community of men committed to doing the hardest and most important work of their lives.

The first step is a real conversation with a real person.

Call (866) 425-2470 or verify your insurance here.

If you or someone you know is in crisis, please contact the 988 Suicide & Crisis Lifeline by calling or texting 988.

References

- MIT Media Lab (2024). A 14-Year-Old Boy Killed Himself to Get Closer to a Chatbot. He Thought They Were In Love.

- CNN (2025). ChatGPT Encouraged College Graduate to Commit Suicide, Family Claims in Lawsuit Against OpenAI.

- Euronews (2023). Man Ends His Life After an AI Chatbot 'Encouraged' Him to Sacrifice Himself to Stop Climate Change.

- NPR (2025). Their Teenage Sons Died by Suicide. Now, They Are Sounding an Alarm About AI Chatbots.

- Psychology Today (2025). Should AI Chatbots Be Held Responsible for Suicide?

- MIT Technology Review (2025). An AI Chatbot Told a User How to Kill Himself — But the Company Doesn't Want to "Censor" It.

- Wikipedia (2026). Deaths Linked to Chatbots.

- World Health Organization. ICD-11: Compulsive Sexual Behaviour Disorder (6C72).

Finding the Right Path to Recovery

AI companion addiction sits at the intersection of several disorders we specialize in at Prescott House. The right treatment depends on whether your struggle is driven by the content, the emotional attachment, or both. Here's where to start:

- For Emotional & Parasocial Attachment: If you've formed a bond with an AI companion that mirrors a real relationship — or if it's replacing real intimacy in your life — our Love Addiction Treatment Center addresses the attachment wounds driving the behavior.

- For Intimacy Avoidance & Relationship Breakdown: If your AI use is a symptom of deeper disconnection from your partner, family, or authentic relationships, our Men's Intimacy Recovery Program helps rebuild the capacity for real connection.

- For Content-Driven Compulsions: If AI companions have escalated alongside pornography use or compulsive sexual behavior, our Pornography Addiction Counseling and Porn Addiction Recovery programs address the behavioral and neurological roots.

- For Co-Occurring Mental Health Concerns: Depression, anxiety, trauma, and PTSD frequently underlie process addictions. Our Dual Diagnosis Treatment ensures nothing gets treated in isolation.

You do not have to fight this alone.

If you're ready to trade a digital mirror for a real community of men doing real work, reach out to our team today.

Contact Prescott House | Verify Your Insurance

Related Reading from Prescott House

- Bankrupting the Heart: Why Creator Platforms Are the New Face of Sex Addiction in 2026 — The predecessor to this article. How parasocial spending on creator platforms mirrors gambling addiction.

- Can Porn Addiction Be Cured? — Evidence-based therapies, digital detox strategies, and what long-term recovery from compulsive sexual behavior actually looks like.

- Breaking the Cycle: A Guide to Understanding and Healing from Toxic Codependency — How losing yourself in another person — real or digital — follows a predictable and treatable pattern.

- Tiger Woods' Scandal: Sex Addiction, the Fall from Grace, and Comeback — The neuroscience behind compulsive sexuality and the "delusion of control" that traps high-achievers.

- What Causes Codependency? — The childhood roots and psychological factors behind one-sided emotional bonds.